If you’ve ever run a database package (.dacpac) publish against a SQL Server or Azure SQL database, chances are good that you have run into the following error when changing the schema for a table that contains data:

SQL72014: .Net SqlClient Data Provider: Msg 50000, Level 16, State 127, Line 6 Rows were detected.

The schema update is terminating because data loss might occur.In this post we will take a look at a common cause for this error and how we can use the ‘Generate smart defaults when applicable‘ publishing option to solve it.

Setup a test environment

The usual cause for this error is that your schema change publish might break the table constraints if data is present in the table. It’s easier to visualize this if we trigger the issue on purpose in a test environment.

- Create a new empty database in SQL Server or Azure SQL Server.

- Create a new database project in Visual Studio (you can use the free edition for this).

- Create a single table with the following schema. Note that the test1 column allows null.

CREATE TABLE dbo.MyTable

(

[ID] INT NOT NULL PRIMARY KEY,

[Name] NVARCHAR(256) NOT NULL,

[Test1] NVARCHAR(128) NULL

)After creating the SQL Server project we need to publish it and add some data that could violate constraints on a future schema change.

- In Visual Studio, right click on the database project to build it, and then publish it to your target SQL Server or Azure SQL instance.

- Connect to the database engine with SQL Server Management Studio and then insert some test data. Note that some values for the test1 column are null, and some contain real data.

INSERT INTO dbo.MyTable VALUES ( 1, 'Rita', 'test column data' )

INSERT INTO dbo.MyTable VALUES ( 2, 'Carl', 'more test column data' )

INSERT INTO dbo.MyTable VALUES ( 3, 'Molly', NULL )

INSERT INTO dbo.MyTable VALUES ( 4, 'Lee', NULL )

INSERT INTO dbo.MyTable VALUES ( 5, 'Brad', 'even more test column data' )A query of the data should look something like this:

Trigger the error condition

Let’s assume we now have a requirement to change the Test1 column from NULL to NOT NULL. If the table is empty, that wouldn’t be a problem and the publish would succeed. However since our table is populated with data that breaks the constraint (data cannot be null), deploying that requires special handling.

Start by making the schema change (which also includes a DEFAULT constraint) in the Visual Studio database project:

CREATE TABLE dbo.MyTable

(

[ID] INT NOT NULL PRIMARY KEY,

[Name] NVARCHAR(256) NOT NULL,

[Test1] NVARCHAR(128) NOT NULL DEFAULT ''

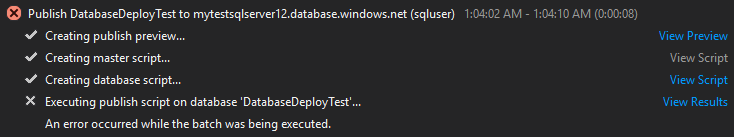

)Attempting to run this publish will fail, even though we provided the default constraint:

Clicking on the ‘view results’ link will show it failed with the ‘data loss might occur’ error message. Clicking on the ‘view script’ link provides more context into what happened:

Some observations from the auto-generated publishing script:

- The ‘data loss might occur’ error message can be see on line 44. You can see that SQL throws this error if there is ANY data in the table, not just data that would violate the constraint.

- The DEFAULT constraint isn’t added until after the ALTER TABLE statement. This means it won’t help us with the data already in the table, it would only help for rows inserted in the future.

The ‘block incremental deployment if data loss might occur’ option

Attempting to fix the issue by unchecking the ‘block incremental deployment if data loss might occur‘ publish option doesn’t fix the problem for this specific scenario.

The reason for this is that some rows of data in our table violate the constraint and this causes the ALTER TABLE statement to fail. Run the publish and you can see exactly that:

SQL72014: .Net SqlClient Data Provider: Msg 515, Level 16, State 2, Line 1

Cannot insert the value NULL into column 'Test1', table 'DatabaseDeployTest.dbo.MyTable';

column does not allow nulls. UPDATE fails.IMPORTANT NOTE: If there is existing data in the table but no rows will violate the newly added constraint, then unchecking the ‘block incremental deployment if data loss might occur’ option will result in a successful publish.

Solution: Generate Smart Defaults

If some of the data in the table does violate the new schema constraints (as in our example), then we can use the ‘Generate smart defaults, when applicable‘ option– it isn’t checked by default we so we need to enable this publishing option:

If we click on the ‘Generate Script’ button after checking smart defaults, we can get a non-destructive preview of what the script will do:

Here is the publish script preview. Notice that before the ALTER TABLE statement, it runs an UPDATE statement that applies a safe default (empty string) to any values in that table that violate the NOT NULL constraint.

Running the publish again with this option enabled now results in a successful publish:

Re-running the SELECT query on the table now shows our data has had the safe default applied for us, and the column definition has indeed changed from NULL to NOT NULL.

What are the smart defaults for each datatype?

You can reference the following table for the defaults for each SQL datatype. From source: https://stackoverflow.com/a/19433730.

| SQL Datatype | Smart Default Value |

| bigint | 0 |

| binary | 0x00 |

| bit | 0 |

| char | <empty string> |

| date | 1900-01-01 |

| datetime | 1900-01-01 00:00:00.000 |

| datetime2 | 1900-01-01 00:00:00.0000000 |

| datetimeoffset | 1900-01-01 00:00:00.0000000 +00:00 |

| decimal | 0 |

| float | 0 |

| hierarchyid | <not available> |

| image | 0x |

| int | 0 |

| money | 0.00 |

| nchar | <empty string> |

| ntext | <empty string> |

| numeric | 0 |

| nvarchar | <empty string> |

| real | 0 |

| smalldatetime | 1900-01-01 00:00:00 |

| smallint | 0 |

| smallmoney | 0.00 |

| sql_variant | <not available> |

| text | <empty string> |

| time | 00:00:00.0000000 |

| timestamp | <not available> |

| tinyint | 0 |

| uniqueidentifier | 00000000-0000-0000-0000-000000000000 |

| varbinary | 0x |

| varchar | <empty string> |

| xml | <empty string> |